The first non-trivial feature I've built at Facebook is now released 🙂

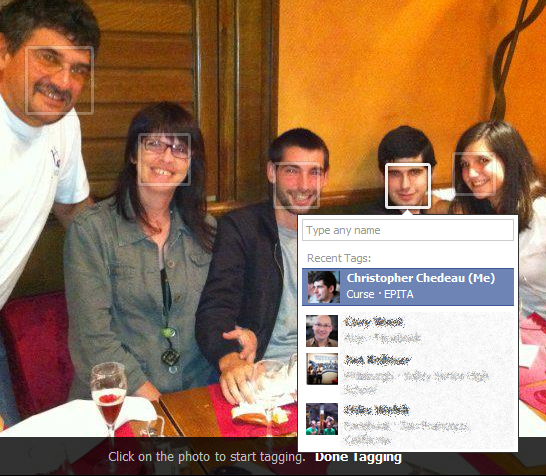

If you have ever tagged an album, you know how painful it is to go through all the photos and tag everyone. To make this process easier, we can use image recognition algorithms to find faces.

How it works

When you enter tagging mode in the photo viewer, all the detected faces are now displayed. The leftmost face is automatically selected and you are prompted to enter the name of the person. When you press enter, the selection automatically moves to the next face.

In the ideal case, you can just write the first name, press enter, write the second and so on. Then you press the right arrow key to go to the next photo. You don't have to use your mouse anymore!
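The flow above can be sketched in a few lines. This is only an illustration and not the code that shipped: `FaceBox`, `TaggingSession` and `press_enter` are hypothetical names, the pixel coordinates are made up, and skipping a face when the name is left empty is my own assumption.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class FaceBox:
    """A detected face as an axis-aligned box (hypothetical pixel units)."""
    x: float
    y: float
    width: float
    height: float


@dataclass
class TaggingSession:
    """Walks through the detected faces of one photo, left to right."""
    faces: list[FaceBox]
    tags: dict[FaceBox, str] = field(default_factory=dict)
    position: int = 0

    def __post_init__(self) -> None:
        # Order faces by their left edge so the leftmost one is selected first.
        self.faces = sorted(self.faces, key=lambda face: face.x)

    @property
    def current(self) -> FaceBox | None:
        """The face currently prompting for a name, or None when done."""
        if self.position < len(self.faces):
            return self.faces[self.position]
        return None

    def press_enter(self, name: str) -> None:
        """Record the typed name (if any) and move on to the next face."""
        face = self.current
        if face is None:
            return
        if name.strip():
            # Assumption: an empty name just skips the suggestion.
            self.tags[face] = name.strip()
        self.position += 1
```

Once `current` returns `None`, every suggested face in the photo has been handled and the right arrow key takes over to move to the next photo.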

Difficulties

This project, strangely, was easy to implement. The difficult part was designing the feature to be user friendly.

Fear of Image Recognition Algorithms

First of all, image recognition algorithms can be frightening. The comparison to 1984 is easy to make. This is a tough issue to deal with, and we've tried our best to use these algorithms in a way that is not creepy.

A typical algorithm has two distinct parts. Detection finds the faces in the image. Recognition tries to match each face with a person.

Here, we only use detection. We just show boxes where the algorithm thinks there are faces. We do not try to guess who the person is.

Image Recognition Algorithms are Not Perfect

Detecting faces in a picture is an extremely hard problem to solve. The current algorithm works well but is not perfect, and sadly will never be.

The way to deal with it is to make the detected faces a suggestion. The user can ignore the suggestion at any moment and tag anywhere else in the picture. The idea is to make tagging faster in the most common case, when the algorithm is right. When it is wrong, the user can fall back to the old flow and tag whatever they want.

In the three months I've been at Facebook, I've seen a strong emphasis on giving people control and letting them share things by themselves. Machine learning is used extensively, but never to automatically publish things on behalf of the user.

Handling Already Tagged Faces

If the face is already tagged, we don't want to prompt the user to tag the person again. However, it is tricky to know whether a face has already been tagged.

In an ideal world, the tags would be centered on the face. However, users don't always do that. There are a lot of tags on people's bodies, feet, hands ... Also, since tags trigger a notification and often an email to the tagged person, users use tags to share an image with someone. The person isn't even in the photo, so no heuristic will help in this case.

We only implement a simple heuristic: if the center of the tag is inside the detected face, we hide the tag. This is going to fail in all the mentioned edge cases but it is not a huge issue in practice. Since we are now going to automatically prompt the user to tag on faces, we are educating them to do so.
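Here is a minimal sketch of that heuristic, not the production code. `Box` and `untagged_faces` are hypothetical names, representing both tags and faces as axis-aligned boxes is an assumption, and so is reading "hide" as "stop suggesting that face".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle, used here for both tags and detected faces."""
    x: float
    y: float
    width: float
    height: float

    def center(self) -> tuple[float, float]:
        return (self.x + self.width / 2, self.y + self.height / 2)

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


def untagged_faces(faces: list[Box], tags: list[Box]) -> list[Box]:
    """Keep only the faces that no existing tag's center falls inside."""
    return [
        face for face in faces
        if not any(face.contains(*tag.center()) for tag in tags)
    ]
```

A tag placed on someone's feet has its center outside every face box, so that face is still suggested. This is exactly the kind of edge case the heuristic accepts.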

Also, most of the tags are done within 24 hours after the image has been uploaded. All the old images with weird cases won't be seen often.

Conclusion

This project was really interesting because it involved not only technical skills but also a lot of design. I've uploaded several albums since I implemented it and found that it made tagging an entire album more efficient and less boring.

This feature also increased the number of tags by a few percent, which results in millions of additional tags! Working at Facebook scale is crazy 🙂